HARMONICS

Musicians and performers engage with sound through deeply embodied, physical gestures. However, the tools available for live visual performance (such as TouchDesigner or Resolume) often rely on interfaces that disconnect performers from their craft.

This project investigates how physical computing interfaces can bridge that gap. It focuses on traditional Malay percussion instruments such as the Kompang, Rebana, and Gedombak to explore the relationship between tangible, rhythmic input and generative visual output.

Traditional performers often have vivid visual concepts for their live performances but face significant barriers when attempting to create digital visuals. Current tools demand technical expertise that feels disconnected from embodied performance practice, forcing practitioners to rely on external technicians rather than controlling their own visual expression.

Visual Harmonics looks at what happens when performers can shape visuals the way they already shape sound. The project develops sensor-based prototypes that take cues from traditional instruments such as the Kompang and Rebana, so performers can control digital visuals through striking, texture, and gesture. Instead of asking practitioners to pick up complex software, the work brings technology closer to how they already move and play.

Experiments & Explorations

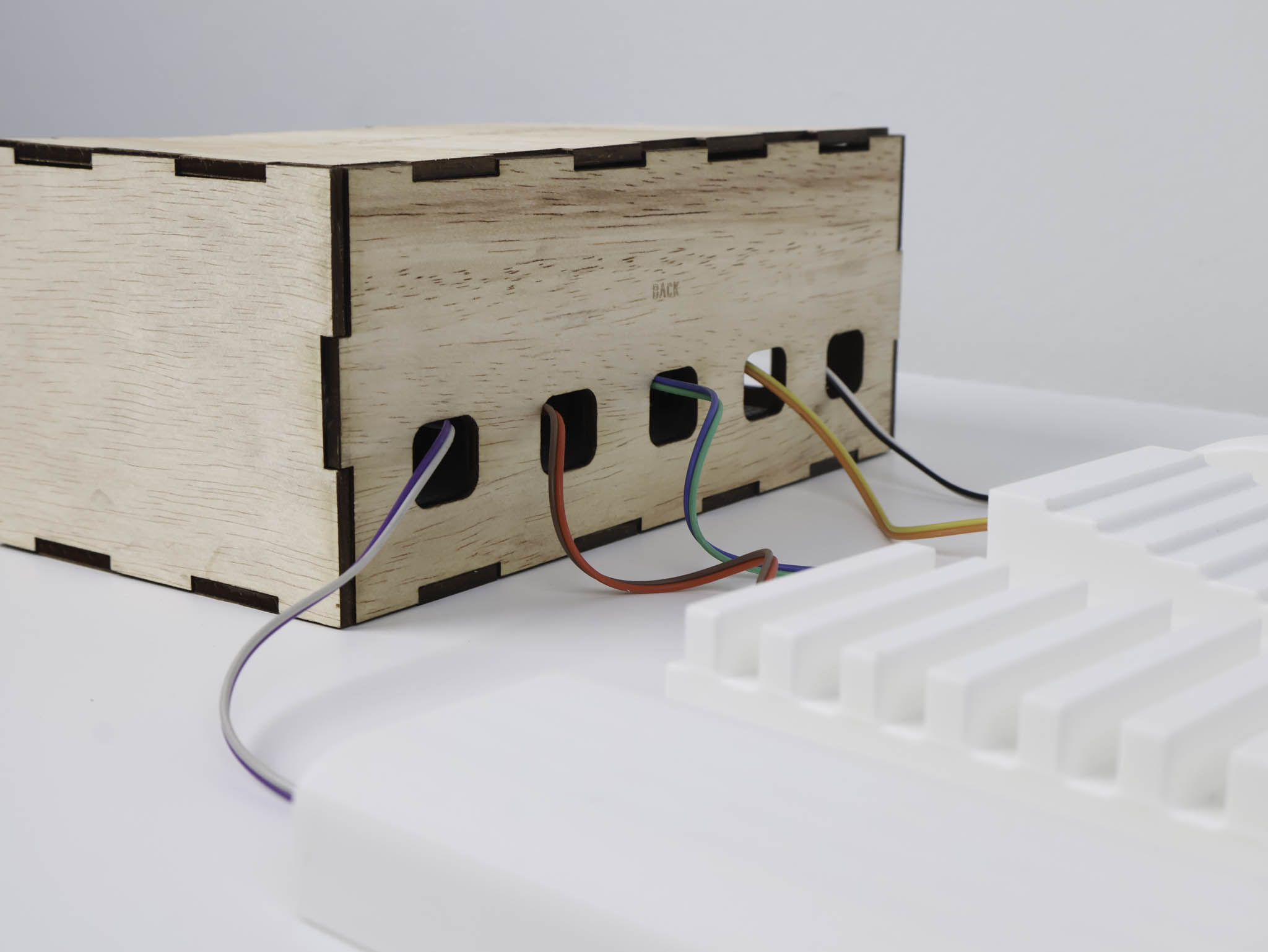

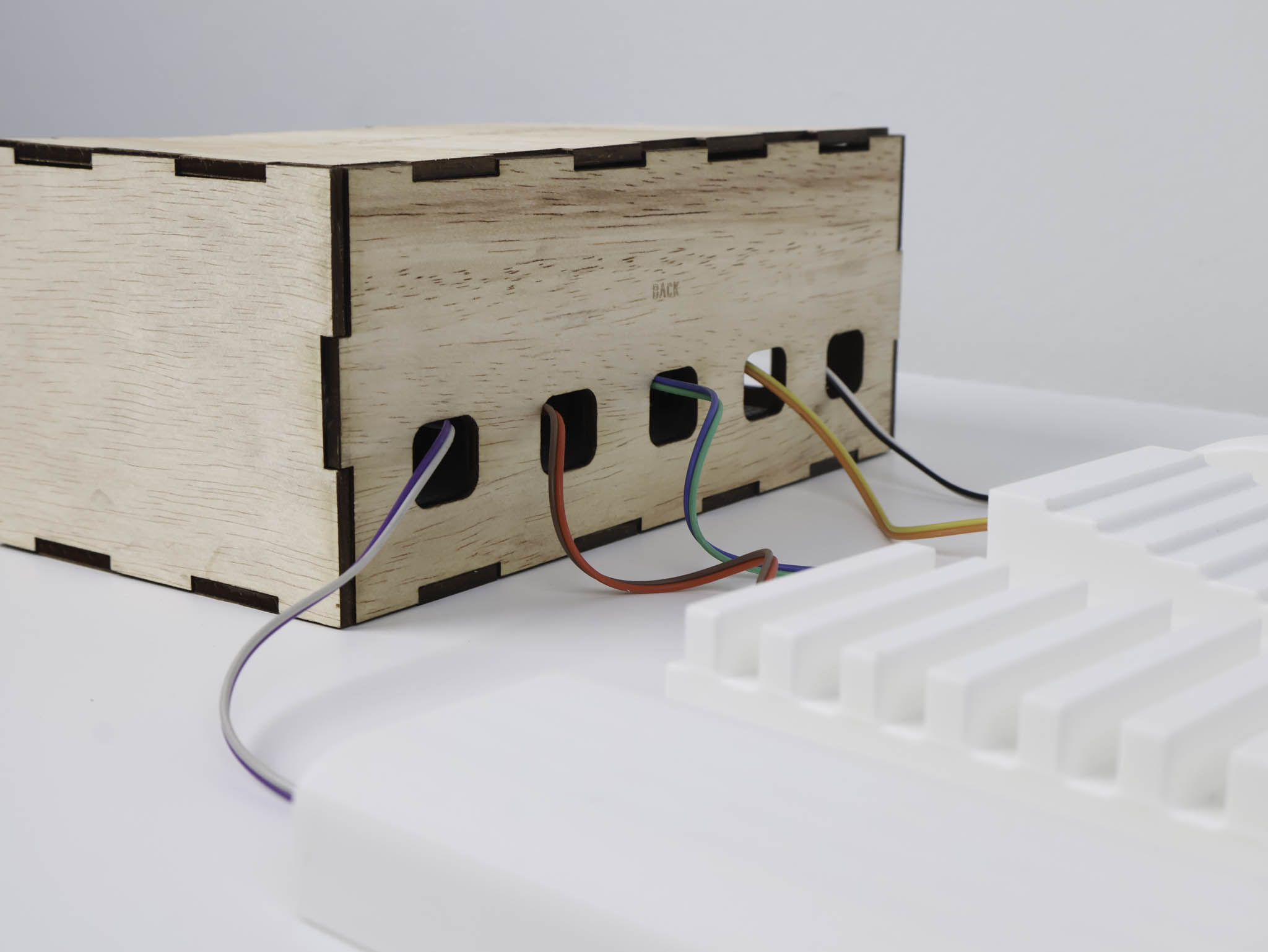

Initial experiments using piezo sensors, force-sensitive resistors, and capacitive touch to detect percussion gestures, mapping analog signals to digital triggers.
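The analog-to-trigger mapping could work roughly as below. This is a minimal sketch, assuming piezo readings arrive as 10-bit values (0 to 1023, the range `analogRead()` returns on boards such as the Arduino Uno). The `detect_strikes` function, its threshold and refractory defaults, and the peak-tracking rule are hypothetical illustrations, not the project's documented method. Python is used only for readability; the same loop could run inside an Arduino sketch or a p5.js `draw()` loop.

```python
from dataclasses import dataclass
from typing import Iterable, List


@dataclass
class Trigger:
    index: int       # sample index where the strike was first detected
    velocity: float  # peak strength within the strike window, 0.0 to 1.0


def detect_strikes(samples: Iterable[int], threshold: int = 100,
                   refractory: int = 5, adc_max: int = 1023) -> List[Trigger]:
    """Convert raw piezo ADC readings into discrete strike triggers.

    A trigger fires when a sample reaches `threshold`. For the next
    `refractory` samples no new trigger can fire; those samples only
    raise the current trigger's velocity if they are higher, so one
    hit's ringing is not counted as several strikes.
    The threshold and refractory defaults are hypothetical.
    """
    triggers: List[Trigger] = []
    cooldown = 0
    for i, value in enumerate(samples):
        level = min(value, adc_max) / adc_max  # normalise to 0.0-1.0
        if cooldown > 0:
            # Inside the window of the last strike: track its peak only.
            cooldown -= 1
            triggers[-1].velocity = max(triggers[-1].velocity, level)
            continue
        if value >= threshold:
            triggers.append(Trigger(index=i, velocity=level))
            cooldown = refractory
    return triggers


# Example: one sharp hit with ringing, then a second, softer hit.
readings = [0, 10, 150, 600, 300, 120, 20, 0, 0, 0, 500, 40]
for t in detect_strikes(readings):
    print(t.index, round(t.velocity, 2))
```

The refractory window is the design choice that matters most here: a struck drum skin keeps vibrating after the hit, so without it one strike would cross the threshold several times. Both values would need tuning for each instrument and sensor mounting.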

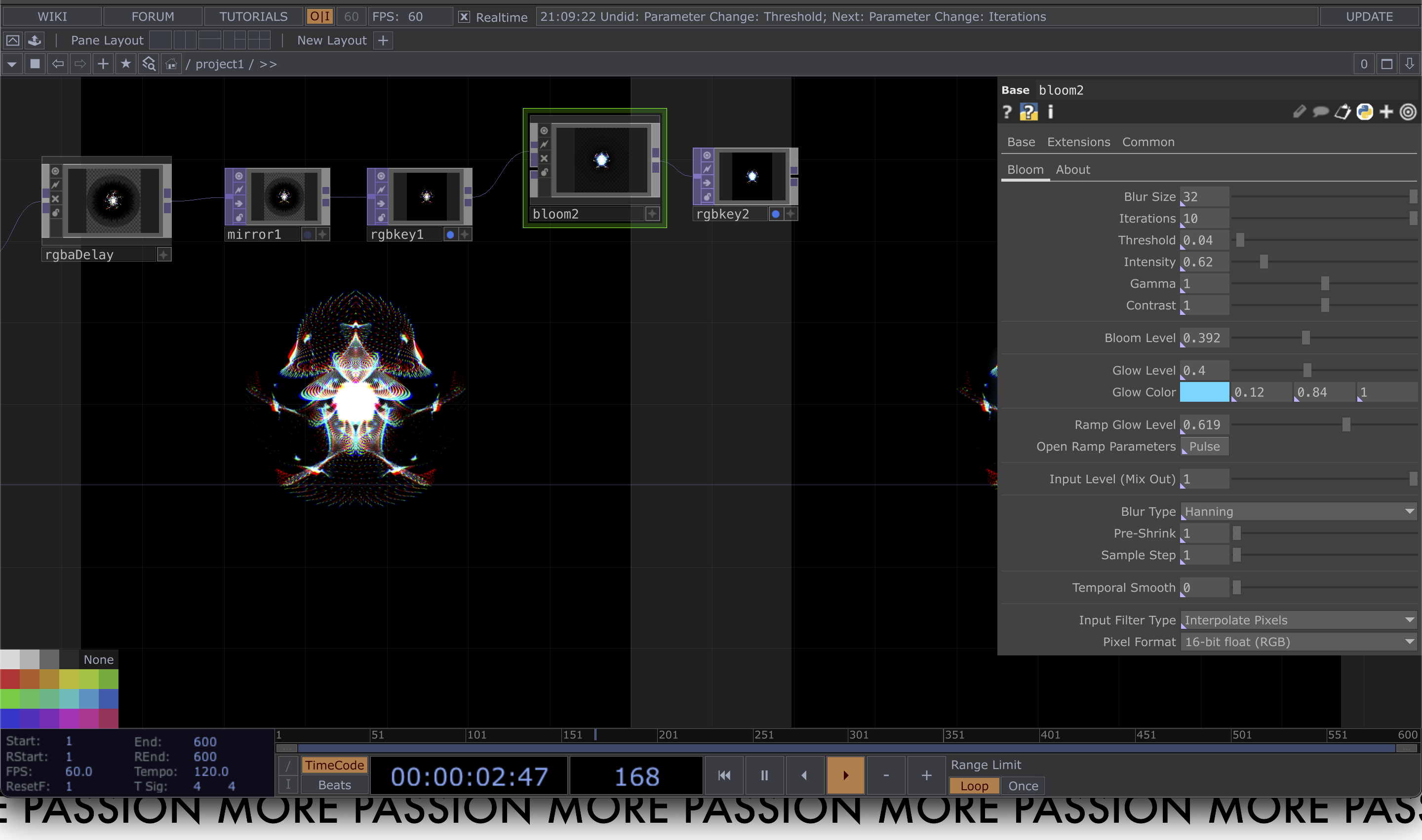

Exploring generative visual systems across p5.js, Blender, and TouchDesigner, developing particle systems, waveform visualisations, and reactive geometries driven by sensor data.
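One way sensor data might drive a particle system is to map each strike's normalised velocity onto burst size and speed. The sketch below is a hypothetical illustration: `ParticleSystem`, `map_range`, the 0.5 to 4.0 speed range, and the 60-frame lifetime are assumptions. `map_range` behaves like p5.js's `map()` with its `withinBounds` argument set to true, but it is a standalone helper, not that API.

```python
import math
import random
from dataclasses import dataclass
from typing import List


def map_range(value: float, in_min: float, in_max: float,
              out_min: float, out_max: float) -> float:
    """Linearly re-map value from one range to another, clamped to the output range."""
    t = (value - in_min) / (in_max - in_min)
    t = max(0.0, min(1.0, t))
    return out_min + t * (out_max - out_min)


@dataclass
class Particle:
    x: float
    y: float
    vx: float
    vy: float
    life: int  # frames remaining before the particle is removed


class ParticleSystem:
    """Hypothetical strike-driven emitter: harder hits give bigger, faster bursts."""

    def __init__(self, max_burst: int = 50, lifetime: int = 60, seed: int = 0):
        self.particles: List[Particle] = []
        self.max_burst = max_burst
        self.lifetime = lifetime
        self.rng = random.Random(seed)  # seeded so runs are repeatable

    def strike(self, velocity: float, x: float, y: float) -> None:
        """Emit a radial burst whose count and speed scale with velocity (0.0 to 1.0)."""
        count = round(map_range(velocity, 0.0, 1.0, 0, self.max_burst))
        speed = map_range(velocity, 0.0, 1.0, 0.5, 4.0)
        for _ in range(count):
            angle = self.rng.uniform(0.0, 2 * math.pi)
            self.particles.append(Particle(x, y, speed * math.cos(angle),
                                           speed * math.sin(angle), self.lifetime))

    def update(self) -> None:
        """Advance one frame: move particles, age them, drop expired ones."""
        for p in self.particles:
            p.x += p.vx
            p.y += p.vy
            p.life -= 1
        self.particles = [p for p in self.particles if p.life > 0]


system = ParticleSystem()
system.strike(0.6, x=200, y=200)  # e.g. the velocity of a detected strike
system.update()
print(len(system.particles))
```

Keeping the mapping in one helper makes it easy to swap the target parameter, for example driving waveform amplitude or geometry scale instead of particle count.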

Connecting Arduino-based circuits to p5.js via serial communication, using joystick controllers and piezo-embedded surfaces as input devices for visual manipulation.
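Serial data arrives in arbitrary chunks rather than whole messages, so the receiving side typically buffers text until a line ending arrives before parsing it. The sketch below assumes a hypothetical line format such as `piezo=512,jx=300,jy=700`, printed once per loop with Arduino's `Serial.println()` (which ends each line with `\r\n`). `SerialLineParser` and the field names are illustrative, not the project's actual protocol; in a p5.js sketch the same buffering would sit wherever incoming serial text is received.

```python
from typing import Dict, List


class SerialLineParser:
    """Reassemble arbitrary serial text chunks into complete sensor frames.

    Assumes a hypothetical format of one frame per line, e.g.
    "piezo=512,jx=300,jy=700\r\n", as Serial.println() would send it.
    """

    def __init__(self) -> None:
        self._buffer = ""

    def feed(self, chunk: str) -> List[Dict[str, int]]:
        """Add newly received text; return any frames completed by it."""
        self._buffer += chunk
        # Everything before the last "\n" is complete; keep the tail for later.
        *lines, self._buffer = self._buffer.split("\n")
        frames = []
        for line in lines:
            frame = self._parse(line.strip())  # strip() also drops the "\r"
            if frame:  # skip empty or fully malformed lines
                frames.append(frame)
        return frames

    @staticmethod
    def _parse(line: str) -> Dict[str, int]:
        frame: Dict[str, int] = {}
        for part in line.split(","):
            key, sep, value = part.partition("=")
            if not sep:
                continue  # no "=" in this field, so it is not a key/value pair
            try:
                frame[key.strip()] = int(value)
            except ValueError:
                continue  # non-numeric value; ignore this field
        return frame


parser = SerialLineParser()
print(parser.feed("piezo=51"))  # incomplete line: returns []
print(parser.feed("2,jx=300,jy=700\r\npiezo=0,"))
```

Keeping the unfinished tail in the buffer means a frame split across two reads, as in the example, is still parsed whole instead of producing two corrupted readings.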

User Testing